Solving Wordle Puzzles with Basic Python

Have you heard of Wordle? It’s a deceptively simple word puzzle. You’re asked to guess the word of the day, which is a five-letter word in English. If you guess wrong, you’re given a few hints: a letter in the word is green if your guess for that letter in that position is right; a yellow letter if that letter is present in the word, but not that position; and gray, if the letter is not in the word at all.

Deceptively simple, and yet quite challenging! Here’s how you can write a Wordle Solver with Python sets, list comprehensions, a bit of good luck!

The Challenge

Every day Wordle generates a new challenge word we must guess. As we only have six guesses – the site uses cookies to keep track of your progress – we must choose carefully!

On the face of it, there’s a number of hints we can exploit:

-

The word is exactly five letters long.

-

It must be English and only the alphabet – no punctuation, numbers or other symbols.

-

A guess yields clues:

-

A green letter if the character and position in the word is correct.

-

A yellow letter if the character is present in the word, but we chose the wrong position.

-

A gray letter if the character is not in the world at all.

-

-

There is a finite number of words, and what is a valid word is limited to the dictionary used by Wordle.

As I don’t want to try and extract the same dictionary as Wordle (that’s too easy) I’ll instead use a freely available dictionary that ships with Linux in /usr/share/dict/american-english. The dictionary is a text file with a single word for each line.

And with those rules and observations out of the way, we can start writing the algorithm for the Wordle solver.

Loading & Generating Words

To start, we’ll need the dictionary – feel free to use one of your own choosing if you like.

Next, we’ll need to encode the rules of the game:

import string

DICT = "/usr/share/dict/american-english"

ALLOWABLE_CHARACTERS = set(string.ascii_letters)

ALLOWED_ATTEMPTS = 6

WORD_LENGTH = 5We’re allowed six attempts; the word length is five, and we can use all available alphabetical characters.

I’m converting the allowable characters to a Python set() so I can use the many features present in sets around membership checks – but more on that in a moment.

From that, I can generate a set of words that match the rules:

from pathlib import Path

WORDS = {

word.lower()

for word in Path(DICT).read_text().splitlines()

if len(word) == WORD_LENGTH and set(word) < ALLOWABLE_CHARACTERS

}Here I’m using a set comprehension to generate a set of legitimate words. I’m doing it by using the excellent Path class to read directly from the file. If you’re not familiar with Path, I recommend you learn about Path as it’s an excellent feature.

But as you can see from the comprehension, I’m filtering the dictionary words so only those of the right length and the ones where the set of characters in a word is a subset of ALLOWABLE_CHARACTERS. In other words, only dictionary words that exist in the set of allowable characters is chosen.

English-Language Alphabetical Frequency Analysis

The thing about the English language is the uneven distribution of letters used in words. The letter E is used more frequently than X, for example. So if we can generate words with the most common letters, we’re more likely to get Wordle to match some or all the characters in the word. So the winning strategy for us it to come up with an algorithm for our Wordle solver that yields the most common letters in use in English.

Luckily, we have a dictionary of English words!

from collections import Counter

from itertools import chain

LETTER_COUNTER = Counter(chain.from_iterable(WORDS))The Counter class is a useful invention. It’s a modified dictionary that counts things. When you feed it values, it tracks the values as keys, and stores the number of occurrences its seen as that key’s value. Very useful for us, as we want the letter frequency.

To do this, I’m using a little-known function from the itertools module called chain. chain has a rather hidden method called from_iterable that takes a single iterable and evaluates it as a long chain of iterables:

I think an example explains it best:

>>> list(chain.from_iterable(["inspired", "python"]))

['i', 'n', 's', 'p', 'i', 'r', 'e', 'd', 'p', 'y', 't', 'h', 'o', 'n']Because strings are iterable also, and because WORDS is a set of strings (iterables) we split up a set (or list, etc.) into their constituent characters. It’s a useful property of strings; you can pass it through something like set to get the unique characters in a word:

>>> set("hello")

{'e', 'h', 'l', 'o'}- Sets are modelled on their mathematical cousins of the same name

-

So that means sets can only hold unique values – no duplicates – and they are unordered. That is why the set of characters is not in the same order as that of the string.

Sets possess many useful features, like testing if one set is wholly contained in another set (subset); getting the elements that overlap two sets (intersection); merging two sets (union); and so on.

So, we’ve counted the letters and it looks pretty good:

>>> LETTER_COUNTER

Counter({'h': 828,

'o': 1888,

'n': 1484,

'e': 3106,

's': 2954,

'v': 338,

# ... etc ...

})But that only gives us the absolute count of characters. Better, then, to have it split into the percentage of the overall total. Luckily, the Counter class has a handy total method that can give us the sum of all letter occurrences.

Turning it into a frequency table is easy:

LETTER_FREQUENCY = {

character: value / LETTER_COUNTER.total()

for character, value in LETTER_COUNTER.items()

}Python 3.10 added the Counter.total() method, so if you’re using an older version of Python, you can replace it with sum(LETTER_COUNTER.values()) which does the same.

Here I’m using a dictionary comprehension to enumerate each key and value of LETTER_COUNTER (it’s a modified dictionary) and dividing each value by the total overall count:

>>> LETTER_FREQUENCY

{'h': 0.02804403048264183,

'o': 0.06394580863674852,

'n': 0.050262489415749366,

'e': 0.10519898391193903,

's': 0.10005080440304827,

# ... etc ...

}And now we have a perfect accounting of the letter frequency of the subset of the dictionary that we consider to be valid Wordle words. Note that I did not do this against the full dictionary — just the parts we consider legitimate Wordle words. It’s unlikely to affect the rankings much, but that’s ultimately the set of words we act on.

Now we need a way of weighing each word so that we can suggest the most likely candidate(s) available. So we need to take our letter frequency table and make a word scoring function that scores how “common” the letters are in that word:

def calculate_word_commonality(word):

score = 0.0

for char in word:

score += LETTER_FREQUENCY[char]

return score / (WORD_LENGTH - len(set(word)) + 1)I am again exploiting the fact that a string is an iterable by iterating over every character in the word. I then get the frequency of each word and add it up; the total tally is then divided by the word length minus the number of unique characters (plus one, to prevent division of zero).

It’s not an amazing scoring function, but it’s simple and weighs words in such a way that more unique characters are given more weight than a word with fewer unique characters. Ideally we’d want as many unique, frequent characters as possible to maximize the likelihood of getting green or yellow matches in Wordle.

A quick test confirms that words with uncommon – and repeated – characters are weighted lower than words with frequent and more unique characters.

>>> calculate_word_commonality("fuzzy")

0.04604572396274344>>> calculate_word_commonality("arose")

0.42692633361558All we need now is a way of sorting and displaying these words so a human player can pick from them:

import operator

def sort_by_word_commonality(words):

sort_by = operator.itemgetter(1)

return sorted(

[(word, calculate_word_commonality(word)) for word in words],

key=sort_by,

reverse=True,

)

def display_word_table(word_commonalities):

for (word, freq) in word_commonalities:

print(f"{word:<10} | {freq:<5.2}")With sort_by_word_commonality I generate a sorted (highest-to-lowest) list of tuples, with each tuple containing the word and the calculated score for that word. The key I am sorting on is the score.

I am not using a lambda to get the first element; for simple stuff like this, I prefer operator.itemgetter which does the same thing.

I also added a quick display function to format the words and their score into a simple table.

Now for the solver.

Writing the Wordle Solver

Because I’m building this as a simple console application, I am going to use input() and print().

def input_word():

while True:

word = input("Input the word you entered> ")

if len(word) == WORD_LENGTH and word.lower() in WORDS:

break

return word.lower()

def input_response():

print("Type the color-coded reply from Wordle:")

print(" G for Green")

print(" Y for Yellow")

print(" ? for Gray")

while True:

response = input("Response from Wordle> ")

if len(response) == WORD_LENGTH and set(response) <= {"G", "Y", "?"}:

break

else:

print(f"Error - invalid answer {response}")

return responseThe functionality is simple. I want to ask the user for a WORD_LENGTH word they gave Wordle, and I want to record the response from Wordle. As there is only three possible answers (green, yellow, and gray) I encode it as a simple string of three characters: G, Y, and ?.

I also added error handling in case the user fumbles the input by looping repeatedly until the correct sequence is given. I do this by again converting the input to a set and then checking if that set of user input is a subset of the valid responses.

Filtering Green, Yellow and Gray Letters with a Word Vector

The Green letter rule indicates that the letter and its position in the word is correct. Yellow that the position is wrong, but that the letter is present in the word; and gray that the letter is not present anywhere.

Another way of interpreting that is that until Wordle starts telling us which letters are green, yellow, or gray, all possibilities exist.

word_vector = [set(string.ascii_lowercase) for _ in range(WORD_LENGTH)]Here I’m creating a list of sets, with the list size equal to the word length – i.e., five. Each element is a set of all lowercase English characters. By making one for each set, I can remove characters as we eliminate them from each position:

- Green letters are limited to just that letter

-

So that means if I encounter a green letter in position 2, then I can amend the set at that position to hold just that letter.

- Yellow letters imply the complement of that letter

-

So all letters but that letter is technically possible in that position. Removing the letter from the set at that position ensures we cannot pick words where that letter is set to that character.

- Gray letters imply the exclusion of that letter across the vector

-

Ergo, that character must be removed from all sets in the word vector.

Ideally, our Wordle solver will try to find as many green letters as possible, as that’s naturally the best type of match.

Now I need a function that tells me if a word matches the word vector. There’s a number of ways you can do this, but this is nice and simple:

def match_word_vector(word, word_vector):

assert len(word) == len(word_vector)

for letter, v_letter in zip(word, word_vector):

if letter not in v_letter:

return False

return TrueThis approach uses zip to pairwise match each character in the word, and each character in the word vector (if any)

If the letter is not in the word vector set at that position, exit with a failed match. Otherwise, proceed and, if we exit the loop naturally, return True indicating a match.

Matching Words

With the rules implemented, we can now write the search function that filters the list of words given the responses we’ve gotten back from Wordle.

def match(word_vector, possible_words):

return [word for word in possible_words if match_word_vector(word, word_vector)]The matcher merges the concepts we’ve just talked about into a single list comprehension that does the checking. Each word is tested against word_vector with match_word_vector.

Iterating the Answer

Finally we need a little UI that can repeatedly query for the answer we want.

def solve():

possible_words = WORDS.copy()

word_vector = [set(string.ascii_lowercase) for _ in range(WORD_LENGTH)]

for attempt in range(1, ALLOWED_ATTEMPTS + 1):

print(f"Attempt {attempt} with {len(possible_words)} possible words")

display_word_table(sort_by_word_commonality(possible_words)[:15])

word = input_word()

response = input_response()

for idx, letter in enumerate(response):

if letter == "G":

word_vector[idx] = {word[idx]}

elif letter == "Y":

try:

word_vector[idx].remove(word[idx])

except KeyError:

pass

elif letter == "?":

for vector in word_vector:

try:

vector.remove(word[idx])

except KeyError:

pass

possible_words = match(word_vector, possible_words)The solve function does a lot of the setup I’ve already explained. But after that, we loop up to ALLOWED_ATTEMPTS + 1, and with each attempt, we display the attempt we’re on and how many possible words remain. Then we call display_word_table to pretty print a nice table of the 15 highest-scoring matches. Then we ask for the word, and the response from Wordle to that word.

Next, we enumerate the response, making sure to remember the position of each answer, so we know where in the word it points to. The code is simple: we map each of the three response characters to the respective container (green to word_vector, etc.) and apply the rules we discussed earlier.

Finally, we override possible_words with the new list of matches from match, and loop again, displaying the now-reduced subset.

Trying it out

Starting it up by calling solve() (with some of the output elided for brevity):

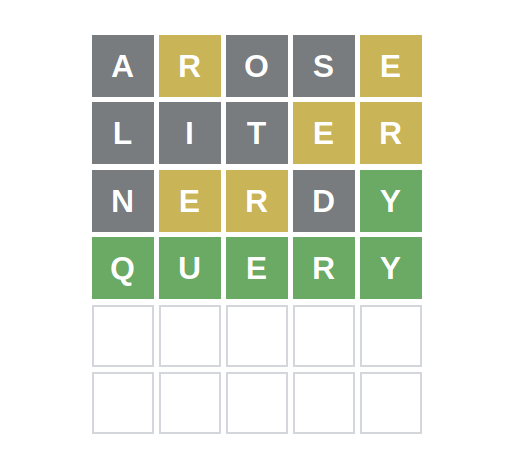

>>> Attempt 1 with 5905 possible words arose | 0.43 raise | 0.42 ... etc ... Input the word you entered> arose Type the color-coded reply from Wordle: G for Green Y for Yellow ? for Gray Response from Wordle> ?Y??Y Attempt 2 with 829 possible words liter | 0.34 liner | 0.34 ... etc ... Input the word you entered> liter Response from Wordle> ???YY Attempt 3 with 108 possible words nerdy | 0.29 nehru | 0.28 ... etc ... Input the word you entered> nerdy Response from Wordle> ?YY?G Attempt 4 with 25 possible words query | 0.24 chewy | 0.21 ... etc ... Input the word you entered> query Response from Wordle> GGGGG Attempt 5 with 1 possible words query | 0.24

Summary

- Comprehensions are powerful Python tools

-

They can combine iteration with filtering, but if you abuse the feature by stacking too many

forloops, or too manyifclauses, you run the risk of making your code much, much harder to read. Avoid nesting more than a few of each. - Sets are a major asset to Python

-

The ability to act on, and know when, to use set membership makes for more stable, more mathematically correct, and more succinct code. It’s used to great effect here – do not neglect sets!

- You can express the entire search space with regular expressions

-

Although I did not explore it, the act of matching (or not matching) characters is what regular expressions do best. Think of a way you can rewrite the matcher and word vectoring using regular expressions.

- The

itertoolsandcollectionsmodule contain useful helpers -

You can accomplish a lot with basic Python if you know how to use the builtin modules.

itertoolsespecially is very useful if you want think about lazily or iteratively computing values.